
In this blog post, we’ll give you some pointers on where to get started with GPUs in Anaconda Distribution. Note that we won’t talk about hybrid architectures, like the Xeon Phi, which combine aspects of both GPUs and CPUs. The Xeon Phi is a very interesting chip for data scientists, but really needs its own blog post. We’ll also focus specifically on GPUs made by NVIDIA, as they have built-in support in Anaconda Distribution. AMD’s Radeon Open Compute initiative is also rapidly improving the AMD GPU computing ecosystem, and we may talk about it in the future.

It is important to remember that GPUs are not general purpose compute devices. They are specialized coprocessors that are extremely good (>10x performance increases) for some tasks, and not very good for others. The most successful applications of GPUs have been in problem domains which can take advantage of the large parallel floating point throughput and high memory bandwidth of the device. Examples include Monte Carlo simulation and particle transport, and machine learning algorithms such as generalized linear models and gradient boosting. Given how quickly the field is moving, it is a good idea to search for new GPU-accelerated algorithms and projects to find out if someone has figured out how to apply GPUs to your area of interest.

What’s the commonality to all these successful use cases? Broadly speaking, applications ready for GPU acceleration have the following features:

For every memory access, how many math operations are performed? If the ratio of math to memory operations is high, the algorithm has high arithmetic intensity, and is a good candidate for GPU acceleration.
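To make the idea of arithmetic intensity concrete, here is a rough sketch that compares two textbook operations. The function names are made up for illustration, and the counts are idealized assumptions: every array element is read or written exactly once, and caching effects are ignored.

```python
def arithmetic_intensity(math_ops, memory_accesses):
    """Return the ratio of math operations to memory accesses."""
    return math_ops / memory_accesses


def vector_add_intensity(n):
    # c[i] = a[i] + b[i]: one addition per element,
    # with two reads and one write per element.
    return arithmetic_intensity(n, 3 * n)


def matmul_intensity(n):
    # C = A @ B for n x n matrices: roughly 2 * n**3 operations
    # (about n multiplies and n adds per output element).
    # Idealized assumption: each of the 3 * n**2 matrix elements
    # is touched in main memory only once.
    return arithmetic_intensity(2 * n**3, 3 * n**2)


if __name__ == "__main__":
    for n in (100, 1_000, 10_000):
        print(n, vector_add_intensity(n), matmul_intensity(n))
```

Under these assumptions the ratio for vector addition stays constant as the problem grows, while the ratio for matrix multiplication grows with `n`. That is one way to see why dense linear algebra tends to be a better fit for GPUs than simple element-wise operations.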

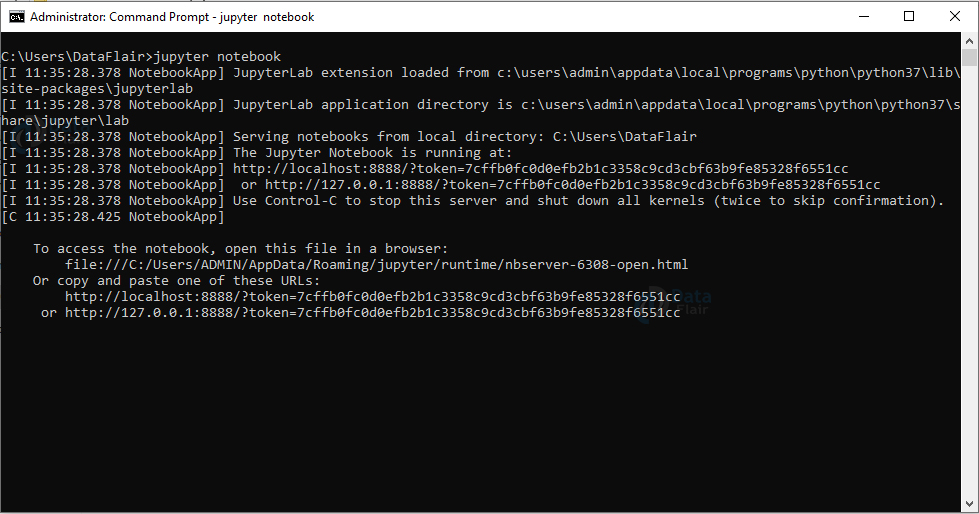

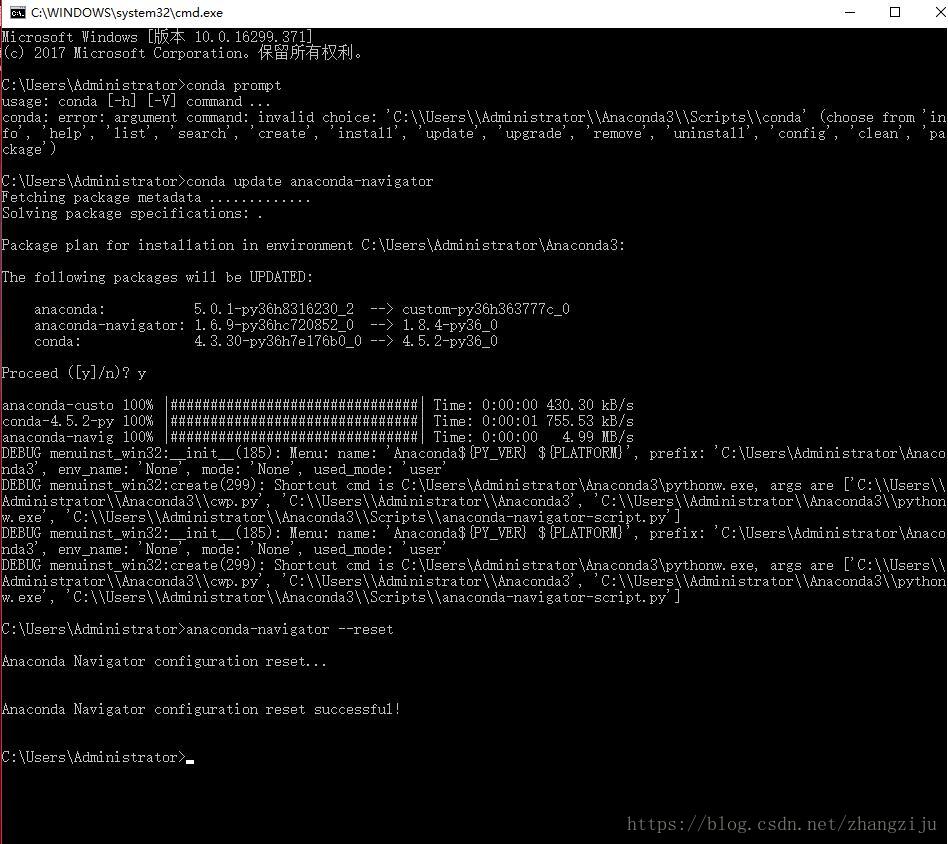

GPU computing has become a big part of the data science landscape. Computational needs continue to grow, and a large number of GPU-accelerated projects are now available. In addition, GPUs are now available from every major cloud provider, so access to the hardware has never been easier. However, building GPU software on your own can be quite intimidating. Fortunately, Anaconda Distribution makes it easy to get started with GPU computing with several GPU-enabled packages that can be installed directly from our package repository.

There are several ways to launch a notebook. One approach is to launch the Anaconda Navigator, click on Launch Jupyter Notebook, then navigate to the notebook you want and launch it. We start by launching the Anaconda Navigator. This may take a little while to load depending on how fast your system is. Once this is done, we click on Launch Jupyter Notebook. Then we go to the folder where the exercise files are and we can now open up a notebook.

The other notebook for each lesson is a completed version of the beginning notebook, provided for your reference.

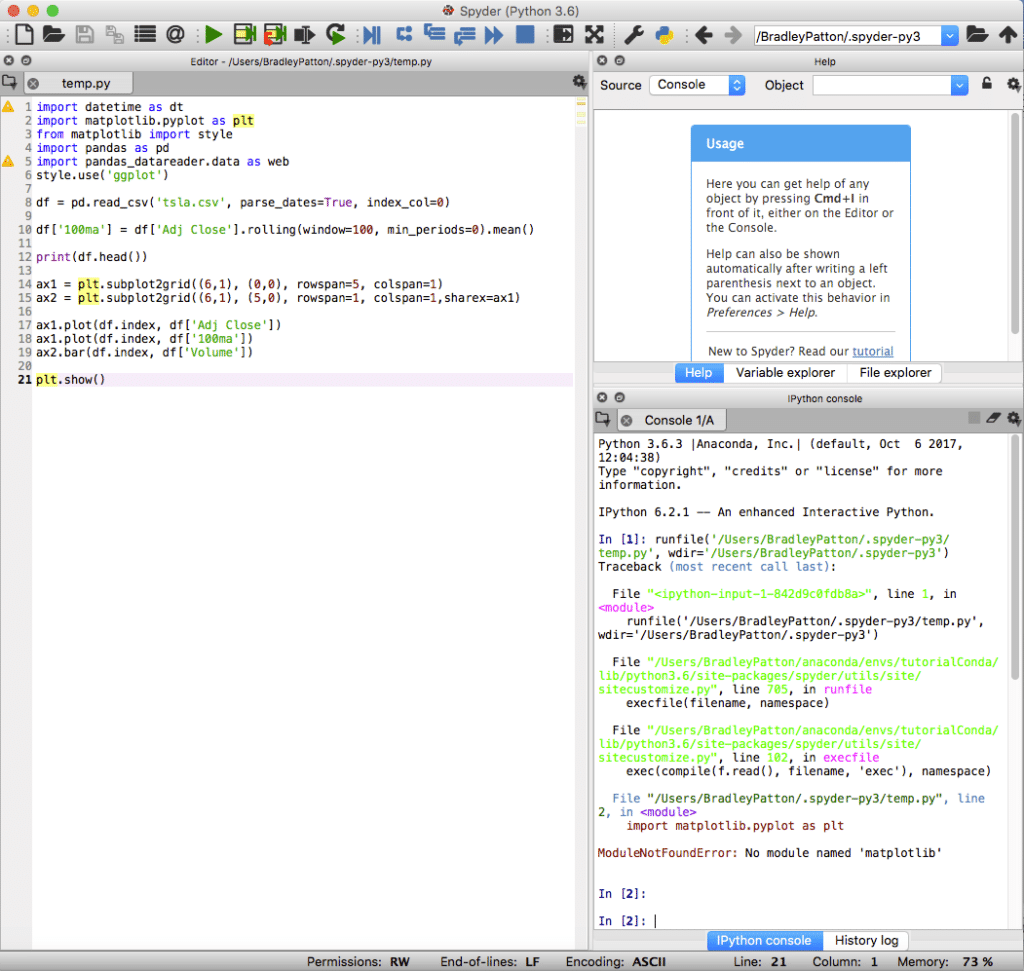

The exercise files are organized into folders that correspond with the chapters of the course. Within each folder are data files and two notebooks for each of the code lessons. Here we see that folder 02 has a data file called mallcustomers. It also has two notebooks for the chapter two lesson videos. The 02b notebook is the beginning notebook. This is the notebook you should code in when following along with the lesson videos.
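If you prefer to check the folder layout from Python rather than a file browser, a small sketch like the following can help. The helper name `list_exercise_files` and the root folder name in the usage note are hypothetical, and the code assumes only that notebooks use the standard `.ipynb` extension; the real file names may differ.

```python
from pathlib import Path


def list_exercise_files(root):
    """Map each chapter folder name to its notebooks and its other files."""
    layout = {}
    # Visit chapter folders in sorted order so the output is stable.
    for folder in sorted(p for p in Path(root).iterdir() if p.is_dir()):
        names = sorted(f.name for f in folder.iterdir() if f.is_file())
        layout[folder.name] = {
            # Jupyter notebooks are stored with the .ipynb extension.
            "notebooks": [n for n in names if n.endswith(".ipynb")],
            # Everything else is treated as a data file (an assumption).
            "data": [n for n in names if not n.endswith(".ipynb")],
        }
    return layout
```

Calling `list_exercise_files("exercise_files")`, where `exercise_files` stands in for wherever you saved the course materials, returns one entry per chapter folder.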

The exercise files you need for this course will be provided to you. This means that you can follow along with any of the code examples in the lessons.

The best way to become proficient in segmenting data with K-Means clustering in Python is to practice doing it yourself.
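As a warm-up for practicing K-Means on your own, here is a minimal sketch of the basic procedure (often called Lloyd's algorithm) in plain Python. It is not the code from the course notebooks: the `kmeans` function and the sample points are made up for illustration, and the data is synthetic rather than the mallcustomers file.

```python
import random


def squared_distance(p, q):
    """Squared Euclidean distance between two 2-D points."""
    return (p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2


def kmeans(points, k, iterations=100, seed=0):
    """Cluster 2-D points into k groups; return (centroids, labels)."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # start from k of the input points
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        labels = [
            min(range(k), key=lambda j: squared_distance(p, centroids[j]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for j in range(k):
            members = [p for p, label in zip(points, labels) if label == j]
            if members:
                mean_x = sum(p[0] for p in members) / len(members)
                mean_y = sum(p[1] for p in members) / len(members)
                new_centroids.append((mean_x, mean_y))
            else:
                # Keep the old centroid if no point was assigned to it.
                new_centroids.append(centroids[j])
        if new_centroids == centroids:
            break  # assignments can no longer change
        centroids = new_centroids
    return centroids, labels


if __name__ == "__main__":
    # Synthetic sample: two visibly separate groups of points.
    sample = [(1, 1), (1, 2), (2, 1), (10, 10), (10, 11), (11, 10)]
    centroids, labels = kmeans(sample, k=2)
    print(centroids)
    print(labels)
```

Writing the two steps out by hand once makes it easier to see what a library implementation is doing for you when you move on to real data.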
